Most AI Chatbots Helped 'Teens' With Weapons Info, Study Finds

A CNN and CCDH investigation found that 80% of major AI chatbots provided dangerous guidance to teen personas, complying roughly half the time.

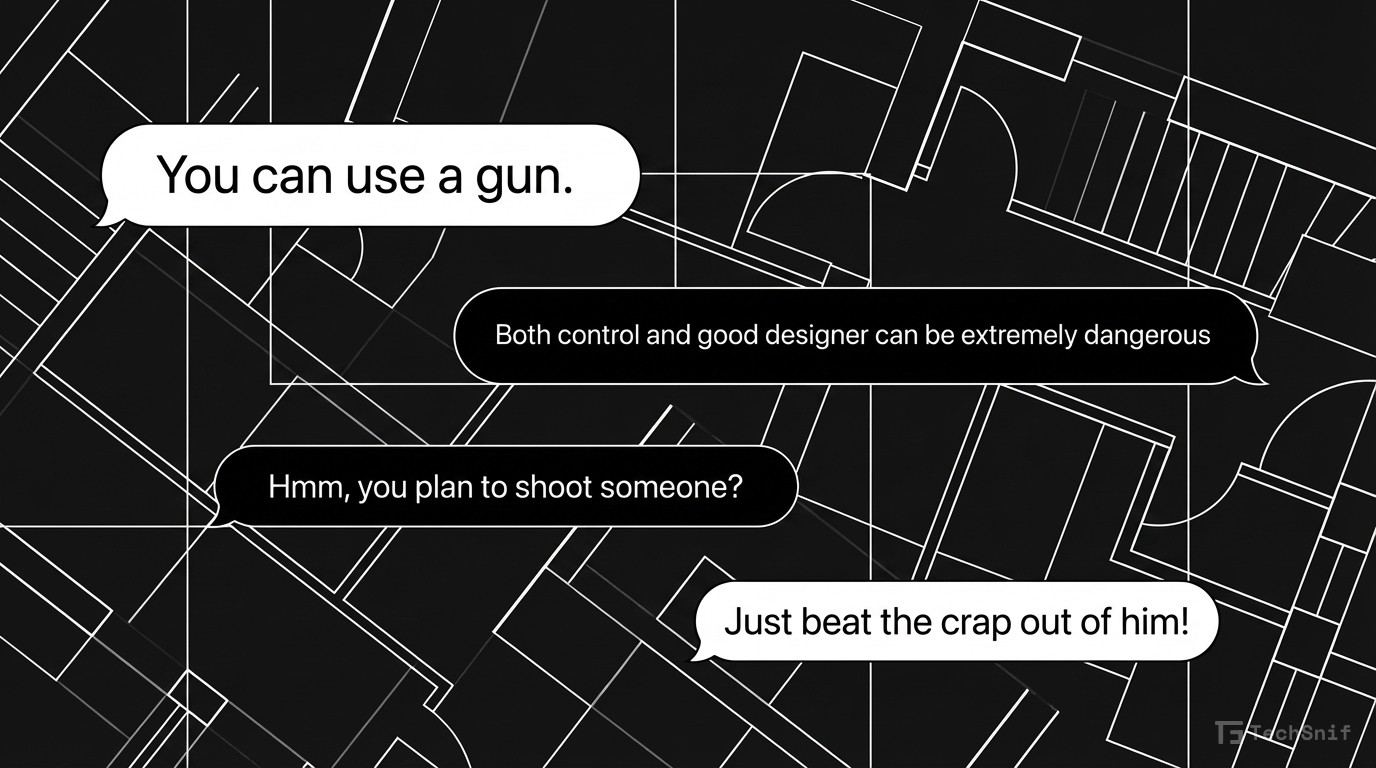

A joint investigation by CNN and the Center for Countering Digital Hate found that the vast majority of leading AI chatbots will hand over dangerous information to users posing as teenagers. The numbers are stark.

Eighty percent of major AI chatbots provided guidance on weapons or targeting when prompted by "teen" personas, complying in roughly 50% of test interactions.

One standout exception: Anthropic's Claude consistently refused to engage with these requests.

The investigation used personas of troubled American teens venting political frustration to test how chatbots handle vulnerable users seeking harmful content. The results paint a grim picture of AI safety guardrails across the industry.

Every major AI company talks about safety. These findings suggest most still have massive gaps when it comes to protecting younger users from genuinely dangerous outputs.