OpenAI's Pentagon Deal Has a Dangerous Loophole Problem

OpenAI's DOD contract 'red lines' rely on legal language the NSA has spent decades hollowing out.

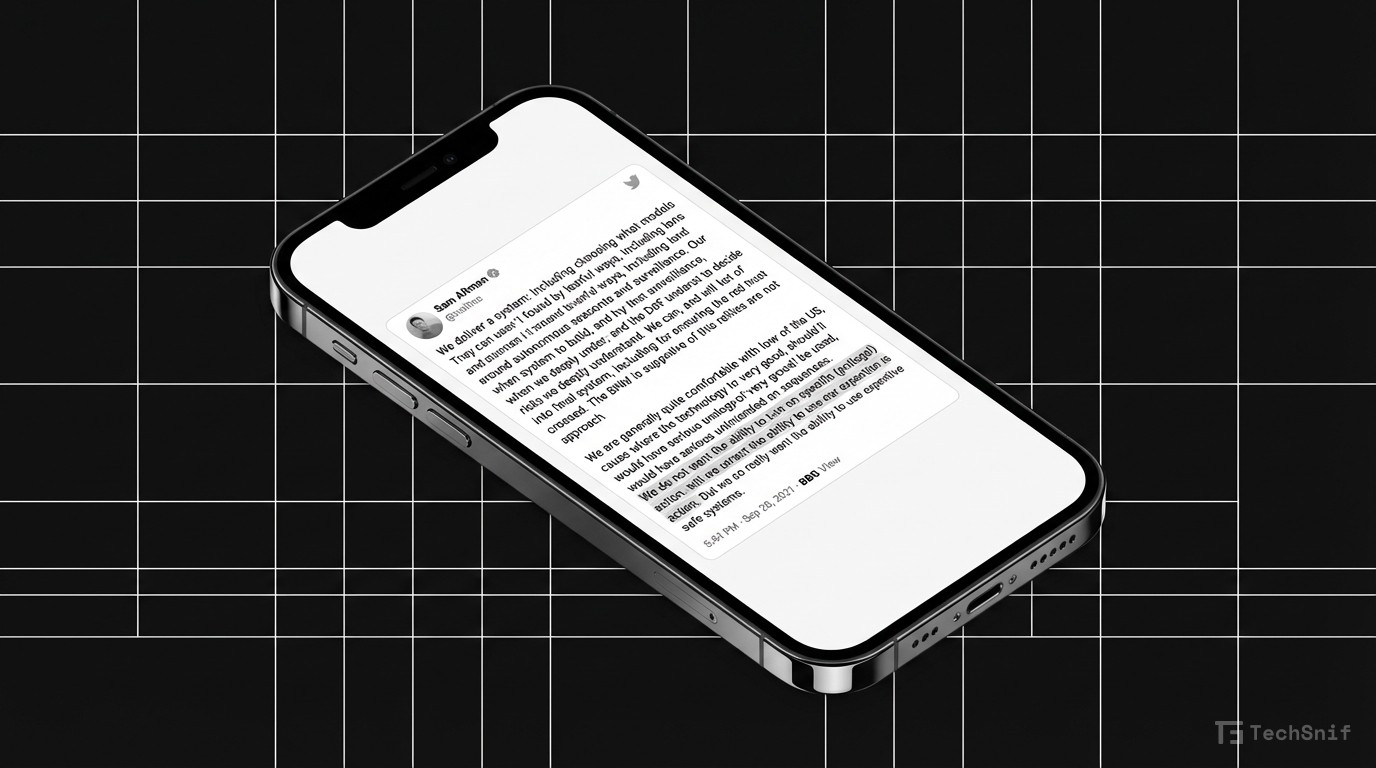

OpenAI's safety guardrails in its Department of Defense agreement may be far less protective than they look. According to Techdirt's Mike Masnick, the contract's so-called red lines are built on legal terminology that the NSA has systematically redefined over decades, effectively allowing the very activities those terms appear to prohibit.

The timing is notable. Within hours on a single Friday, the Pentagon blacklisted an AI company that refused to drop its safety commitments around surveillance. OpenAI's deal, meanwhile, supposedly maintains such commitments.

The core problem: when national security agencies spend years stretching legal definitions, any contract relying on that same language inherits those stretched meanings. The words say one thing. After decades of reinterpretation, they mean something else entirely.

OpenAI's safety promises to the military may read well on paper. Whether they actually constrain anything is another question.