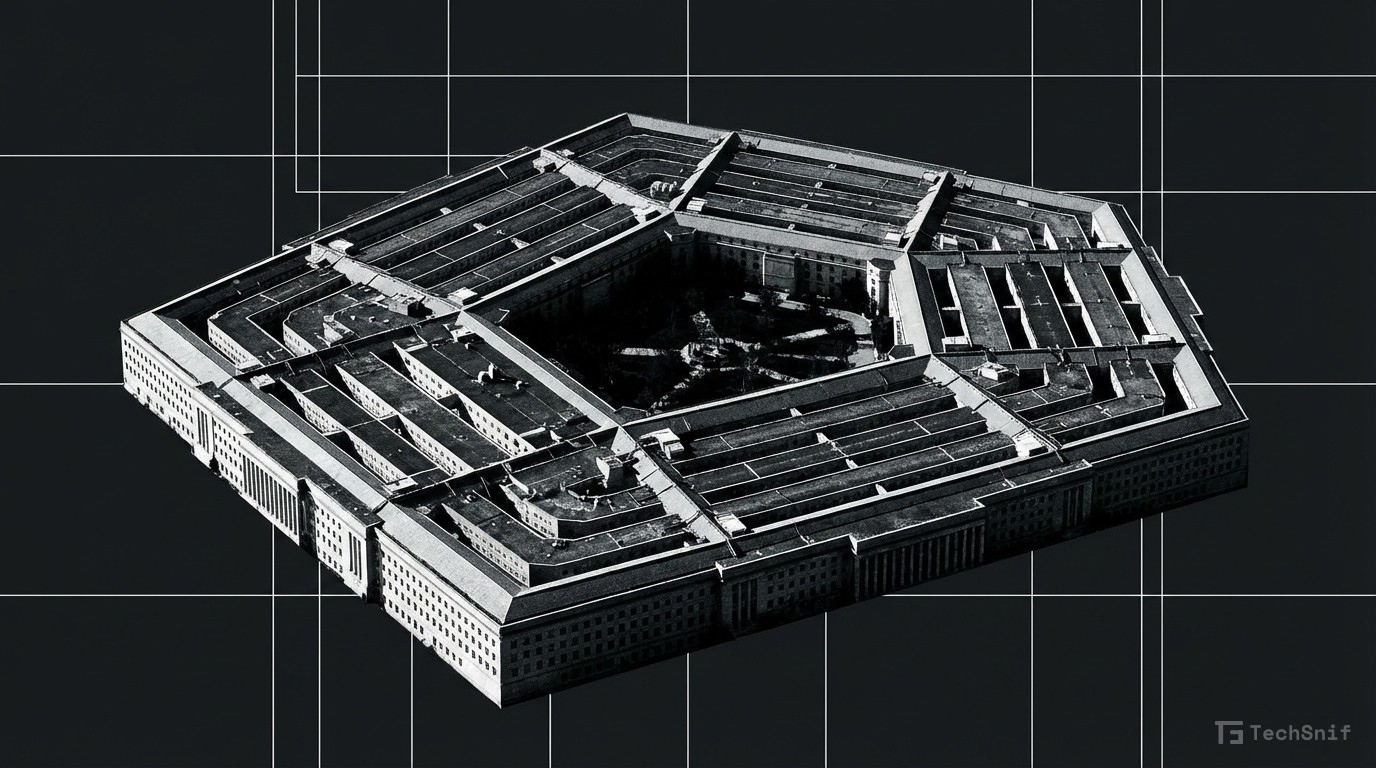

Pentagon and Anthropic Clash Over AI in Nuclear Scenarios

Tensions between the U.S. military and Anthropic boiled over after talks about deploying Claude in nuclear missile attack simulations.

Anthropic and the Pentagon are on a collision course over how the military can use artificial intelligence, specifically whether AI should play a role in lethal decision-making.

The standoff escalated after discussions about potentially using Anthropic's Claude model in hypothetical nuclear missile attack scenarios, according to sources cited by the Washington Post. That's about as high-stakes as AI deployment gets.

The core question is deceptively simple: should AI be allowed to kill? Anthropic, which has built its brand around AI safety, appears to be drawing a hard line. The Pentagon, unsurprisingly, wants more flexibility.

This isn't an abstract ethics debate anymore. It's a concrete standoff between one of the world's most powerful militaries and one of AI's most prominent safety-focused startups. The outcome could shape AI governance for years to come.